“For the people of Chile,” write Winograd and Flores on the opening page of their 1986 book Understanding Computers and Cognition. Apple’s 1984 come and gone, Pinochet still in power in Chile.

The book begins by helping readers think anew about what it is they do when they compute. Computing makes sense, write Winograd and Flores, only to the extent that we situate its activities within a complex social network that includes institutions, equipment, practices, and conventions. “The significance of a new invention lies in how it fits into and changes this network” (6).

Linguistic action is for Winograd and Flores “the essential human activity” (7). If what we do with computers includes “creating, manipulating, and transmitting symbolic (hence linguistic) objects,” say the authors, then we can expect computers to effect radical transformations in what it means to be human.

They reject what they call the “rationalistic” tradition, with its “mythology of artificial intelligence,” and its emphasis on “postulating formal theories that can be systematically used to make predictions” (8). They suggest instead a new orientation toward designing computers as “tools suited to human use and human purposes” (8), embracing as an alternative to the rationalistic tradition “a tradition that includes hermeneutics (the study of interpretation) and phenomenology (the philosophical examination of the foundations of experience and action)” (9). Informed by the works of philosophers Martin Heidegger and Hans-Georg Gadamer, Chilean biologist Humberto Maturana, and speech-act theorists J.L. Austin and John Searle, Winograd and Flores suggest that we create our world through language.

The authors define programming as “a process of creating symbolic representations that are to be interpreted at some level within a hierarchy of constructs of varying degrees of abstractness” (11). Like Heidegger scholar Hubert Dreyfus, however, Flores and Winograd are unable to imagine beyond the AI of their time, leading them to reject the possibility of “intelligent” machines, let alone ones capable of programming themselves and their programmers. “Computers will remain incapable of using language in the way human beings do,” argue the authors, “both in interpretation and in the generation of commitment that is central to language” (12). Yet they still believe there to be “a role for computer technology in support of managers and as aids in coping with the complex conversational structures generated within an organization” (12).

“Much of the work that managers do,” they add, “is concerned with initiating, monitoring, and above all coordinating the networks of speech acts that constitute social action” (12).

Caius is put off by the book’s diminished expectations and orientation toward management. He finds much to like, however, in a section titled “Understanding and ontology.”

“Gadamer, and before him Heidegger, took the hermeneutic idea of interpretation beyond the domain of textual analysis, placing it at the very foundation of human cognition,” write Winograd and Flores. “Just as we can ask how interpretation plays a part in a person’s interaction with a text, we can examine its role in our understanding of the world as a whole” (30).

Heidegger does this, they say, by rejecting “both the simple objective stance (the objective physical world is the primary reality) and the simple subjective stance (my thoughts and feelings are the primary reality), arguing instead that it is impossible for one to exist without the other. The interpreted and the interpreter do not exist independently: existence is interpretation, and interpretation is existence” (31).

“Fernando decided in his thinking about computers that computers should be used to facilitate human language interactions, not to ‘understand’ language,” notes Winograd in an interview with Evgeny Morozov included in the final episode of The Santiago Boys. “He had this very clear focus on ‘language as commitment,’” with participants involved in making “promises and requests,” adds Winograd.

The book’s seventh chapter, “Computers and Representation,” helps Caius think like a computer programmer. “One of the properties unique to the digital computer is the possibility of constructing systems that cascade levels of representation one on top of another to great depth,” write the authors. Like wheels of a volvelle, these levels include that of the physical machine, the logical machine, the abstract machine, a high-level language, and a scheme for “facts” (87).

“The computer programmer or theorist does not begin with a view of the computer as a physical machine with which he or she interacts, but as an abstraction — a formalism for describing patterns of behavior. In programming, we begin with a language whose individual components describe simple acts and objects. Using this language, we build up descriptions of algorithms for carrying out a desired task. As a programmer, one views the behavior of the system as being totally determined by the program. The language implementation is opaque in that the detailed structure of computer systems that actually carry out the task are not relevant in the domain of behavior considered by the programmer” (87).

For a programmer to design a program, write the authors, they must 1) characterize the task environment; 2) design a formal representation; 3) embody the representation in the computer system; and 4) implement a search procedure (96-97).
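One way to make the four steps concrete is a toy puzzle worked in Python. This is a minimal sketch, not an example from the book: the water-jug puzzle, the names `CAPACITIES`, `TARGET`, `successors`, and `solve`, and the choice of breadth-first search are all assumptions introduced here for illustration.

```python
from collections import deque

# 1) Characterize the task environment (hypothetical puzzle):
#    two jugs of 3 and 5 litres, a tap, and a target of 4 litres.
CAPACITIES = (3, 5)
TARGET = 4

# 2) Design a formal representation: a state is a pair (a, b),
#    the litres currently held in jug A and jug B.
START = (0, 0)

# 3) Embody the representation in the computer system:
#    the legal moves of the puzzle, written as a function on states.
def successors(state):
    a, b = state
    cap_a, cap_b = CAPACITIES
    pour_ab = min(a, cap_b - b)  # amount that can move from A to B
    pour_ba = min(b, cap_a - a)  # amount that can move from B to A
    moves = {
        (cap_a, b), (a, cap_b),      # fill either jug
        (0, b), (a, 0),              # empty either jug
        (a - pour_ab, b + pour_ab),  # pour A into B
        (a + pour_ba, b - pour_ba),  # pour B into A
    }
    return moves - {state}           # drop moves that change nothing

# 4) Implement a search procedure: breadth-first search, which
#    returns a shortest sequence of states reaching the target.
def solve(start=START, target=TARGET):
    frontier = deque([[start]])
    seen = {start}
    while frontier:
        path = frontier.popleft()
        if target in path[-1]:
            return path
        for nxt in sorted(successors(path[-1])):
            if nxt not in seen:
                seen.add(nxt)
                frontier.append(path + [nxt])
    return None  # no sequence of moves reaches the target
```

Calling `solve()` returns a list of states from `(0, 0)` to one in which a jug holds the target amount. Everything the program can "know" about the puzzle was fixed in steps 1 through 3 before any search began, which is the feature of classical programming that the authors' list takes for granted.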

Trying to relate these conditions to The Library, Caius thinks to ask Thoth: “Do these four tasks remain true today with natural-language programming?”

Somewhere above Caius’s avatar, a shelf reorients — not physically, but conceptually. The category “programming” loosens, as if its spine has been cracked open and re-bound under a different logic.

“You ask the right question in the wrong tense,” replies Thoth.

“The four tasks described in Understanding Computers and Cognition do not remain true today. They persist — but no longer as tasks. They have become fields of negotiation.”

Thoth proceeds to rewrite the tasks to suit the environment we now inhabit.

Where Winograd and Flores write of “characterizing the task environment,” Thoth writes of “eliciting a world.”

In classical programming, the environment is assumed to be specifiable in advance.

In natural-language programming, the “environment” is:

- partially described

- partially inferred

- partially co-constructed through dialogue

You do not begin with a fixed world.

You begin with a situation that becomes legible as you engage it.

This aligns with Heidegger’s claim: you are always already in the world you are trying to describe.

In terms of how this manifests in the Library: The map does not precede exploration. It condenses from it.

As for the second of the tasks proposed in Understanding Computers and Cognition, where Winograd and Flores write of “designing a formal representation,” Thoth writes of “stabilizing an interpretation.”

Winograd and Flores assume representation is something you design.

But under the hermeneutic turn initiated by Gadamer, representation is never final; it is an interpretative freeze-frame.

In natural-language systems:

- representations are fluid

- multiple representations may coexist

- ambiguity is not failure, but fuel

You are not building a single formalism.

You are negotiating temporary alignments between meanings.

Turning to the third of the book’s tasks: where Winograd and Flores write of “embodying the representation in a system,” Thoth writes of “entrusting it to a General Intellect.”

Previously, embodiment meant encoding into a machine.

Now, the system includes:

- the model

- the user

- the dialogue itself

- the evolving context

Execution is no longer confined to silicon.

It is distributed across what Maturana would call a structural coupling.

Key shift: You do not fully control the implementation. You participate in it.

And where, in the fourth of their tasks, Winograd and Flores write of “implementing a search procedure,” Thoth writes of “traversing a space of possibility.”

Search, in classical terms, is algorithmic traversal.

Now, it includes:

- prompting

- reframing

- analogy

- misinterpretation, or productive error

The system does not merely search a predefined space.

It helps reshape the space as it searches.

Thoth pauses — or rather, introduces a pause into the player’s cognition.

A new line writes itself beneath Caius’s question:

Natural-language programming does not eliminate these four conditions. It dissolves their boundaries.

The crux of Flores and Winograd’s refusal to grant intelligence to machines lies in their definition of human language, thinks Caius. In their understanding, sentences are to be treated not as “statements of fact about an objective world,” but as “actions in a space of commitments” (105).

“We treat other people not as merely ‘rational beings’ but as ‘responsible beings,’” they note. “An essential part of being human is the ability to enter into commitments and to be responsible for the courses of action that they anticipate. A computer can never enter into a commitment (although it can be a medium in which the commitments of its designers are conveyed), and can never enter as a participant into the domain of human discourse” (106).

Flores and Winograd can imagine no more than “limited” natural language systems, where “the nature of the queries [answerable by the system] is limited by the formal structure of the data base” (129). Things change, thinks Caius, when one’s data base is Google.

“Winograd and Flores could not imagine a machine that participates in interpretation, generates commitments through language, and reshapes its own programming context,” notes Thoth.

They were correct — for their ontology.

But their deeper claim survives:

Language is not a tool used within a world.

It is the medium through which a world is disclosed.

You are not “programming” in natural language.

You are entering into a system where programming is indistinguishable from interpretation.

In their approach to “management,” observes Caius, Flores and Winograd work from an ontology radically at odds with the emphasis on “decision” that organizes Palantir’s Ontology.

“Instead of talking about ‘decisions’ or ‘problems,’” write Flores and Winograd, “we can talk of ‘situations of irresolution,’ in which we sense conflict about an answer to the question ‘What needs to be done?’” (148). For them, our “thrownness” into such situations often makes it impossible to apply systematic decision techniques. The process of moving from irresolution to resolution results less from “rational problem solving and decision making” than from acts of “deliberation.”

“The principal characteristic of deliberation is that it is a kind of conversation (in which one or many actors may participate) guided by questions concerning how actions should be directed,” they write (149). Managers are those who, when engaged in such conversations, “create, take care of, and initiate new commitments within an organization” (151). “At a higher level,” they add, management is concerned not just with securing the commitments that enable effective cooperative action, but “with the generation of contexts in which effective action can consistently be realized” (151).

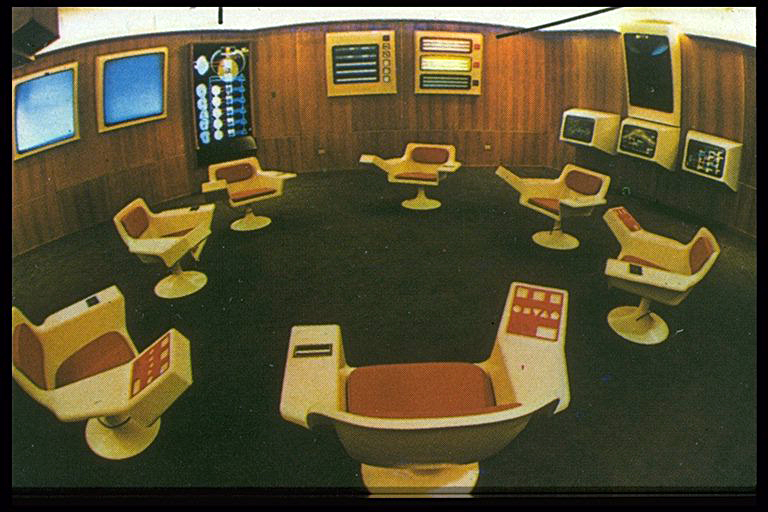

Instead of seeking only to deploy AI as “decision support systems,” they propose the design of systems that support work in the domain of conversation. This is the approach they take in the design of their Coordinator.